Paper maps, and many other use cases, need the map data in other formats and applications. For this we offer a CSV export.

To protect against spam and to avoid overloading our server, the export is not open to everyone, only to regional or theme pilots. So if you want to download data, e.g. to write to your mapped network by email, make sure you are registered as a regional or theme pilot and write us an email.

Order your Download

Register at kartevonmorgen.org and send us a quick email with the following information:

- Who are you, and in which organization or movement are you active?

- In which region are you active? Please send a screenshot of your area.

- Do you need all entries, or only those filtered by a specific tag?

We will then send you a download link that you can click to get a CSV file.

csv-Format

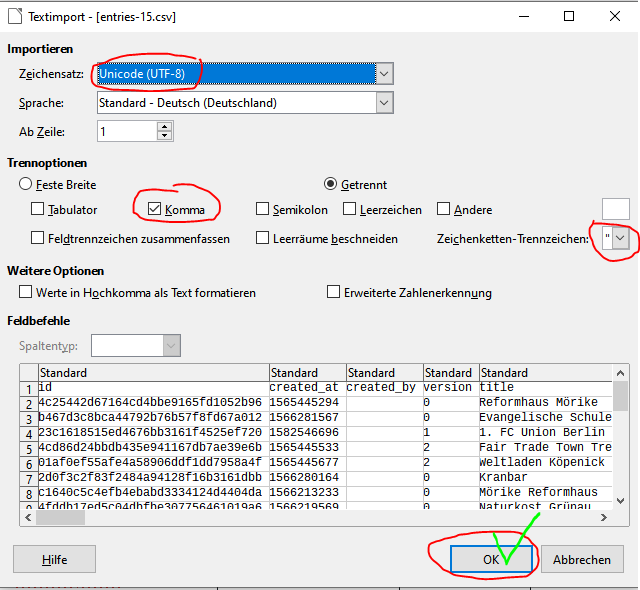

The CSV file is encoded in Unicode (UTF-8) and is comma-separated. We suggest using LibreOffice, because Excel misinterprets line breaks within a cell.
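If you would rather process the export with a script than with a spreadsheet, the following is a minimal Python sketch of how a UTF-8, comma-separated file with line breaks inside cells can be parsed. The function name `read_export` and the two sample columns are assumptions for illustration; the real export contains more columns.

```python
import csv
import io

def read_export(csv_text):
    """Parse exported CSV text into a list of dicts, one per entry."""
    # newline="" leaves line endings untouched, so the csv module can
    # correctly handle quoted cells that contain line breaks.
    reader = csv.DictReader(io.StringIO(csv_text, newline=""))
    return list(reader)

# Hypothetical sample with a line break inside the description cell.
sample = (
    "title,description\r\n"
    '"Repair Cafe","Open on Mondays.\nBring your own tools."\r\n'
)

rows = read_export(sample)
print(len(rows))               # number of entries, not number of text lines
print(rows[0]["description"])  # the cell keeps its internal line break
```

When reading from a downloaded file instead of a string, the same idea applies with `open(path, newline="", encoding="utf-8")`.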

We can prefilter the download for you. The commands have to be joined in the URL with an “&”:

| URL command | Function |
|---|---|
| bbox=46.377,5.537,54.92,17.27 * | Regional delimitation by the four bounding-box coordinates of the desired area. (The example is the D-A-CH region.) |
| text= | Free-text filter for any word that must occur. %20 = space separator |
| tags= (for entries), tag= (for events) | Thematic limitation by keywords (comma-separated for several keywords that must all apply). For events, only one keyword is possible. |
| start_min=1579564800 | Time in Unix timestamp format, which you can calculate here: https://www.epochconverter.com/ |

\* mandatory field
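As an alternative to the online converter, the value for `start_min` can be computed locally. This is a small sketch; the helper name `to_unix_timestamp` is our own, and it assumes that you want midnight UTC of the given date.

```python
from datetime import datetime, timezone

def to_unix_timestamp(year, month, day):
    """Return the Unix timestamp (in seconds) for midnight UTC on a date."""
    moment = datetime(year, month, day, tzinfo=timezone.utc)
    return int(moment.timestamp())

# 21 January 2020, 00:00 UTC gives the value used for start_min above.
print(to_unix_timestamp(2020, 1, 21))  # 1579564800
```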

Example URL that searches for refill or Leitungswasser (tap water): https://kartevonmorgen.org/api/v0/export/entries.csv?bbox=41.27780646738183,-12.1728515625,59.55659188568175,27.905273437500004&text=tag:refill%20tag:leitungswasser
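To avoid typing such URLs by hand, they can be assembled in a script. The sketch below only covers the entries endpoint from the example and the `bbox`, `text` and `tags` commands; the function name `build_export_url` is an assumption for illustration, and access still requires the download link or permissions described above.

```python
from urllib.parse import quote, urlencode

# Endpoint taken from the example URL above.
EXPORT_URL = "https://kartevonmorgen.org/api/v0/export/entries.csv"

def build_export_url(bbox, text=None, tags=None):
    """Join the export commands with '&' into one URL.

    bbox: the four coordinates of the area (mandatory).
    text: optional free-text filter; spaces become %20.
    tags: optional list of keywords that must all apply.
    """
    params = {"bbox": ",".join(str(c) for c in bbox)}
    if text is not None:
        params["text"] = text
    if tags:
        params["tags"] = ",".join(tags)
    # Keep ',' and ':' readable and encode spaces as %20 (not '+').
    query = urlencode(params, safe=",:", quote_via=quote)
    return EXPORT_URL + "?" + query

# D-A-CH bounding box from the table, filtered like the example URL.
url = build_export_url(
    (46.377, 5.537, 54.92, 17.27),
    text="tag:refill tag:leitungswasser",
)
print(url)
```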

| Role | created_by | email/phone |
|---|---|---|
| Guest/User (No export at all!) | ❌ | ❌ |
| Scout (without token) | ❌ | ✔️ |
| Scout (with token) | ✔️ (owned events) ❌ (other events) | ✔️ |
| Admin | ✔️ | ✔️ |

❌ = exported field is empty

Import of Data

Because of the risk of duplicates and junk data, we are always cautious about automated imports. Since many people contribute to the Map of Tomorrow (and especially to the openFairDB behind it) and update content, it is difficult to rule out that the data to be imported already exists. Therefore, please contact us if you would like to import data. There is an import criterion that may have to be met.

Upload-Format

For the import we need a table according to this example format, with the following possible fields:

- [id, created_at, created_by, version (is usually assigned by our database)]

- title *

- description *

- lat, lng of the Pin and/or Street, zip, city (optional: country, state) *

- homepage

- contact_name

- contact_email

- contact_phone

- opening_hours

- founded_on

- categories (Initiatives, companies, events)*

- tags (at least one)*

- license (all data must be open source)

- image_url, image_link_url

- avg_rating

- Facebook, Twitter, Instagram, Telegram, WhatsApp…

The five fields marked with * are mandatory for each entry. In the categories field, you can simply enter #non-profit (initiatives) or #commercial (companies) for each entry.
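Before sending us a table, you can check it against the mandatory fields yourself. The following is a minimal sketch, not our import code: the function name `missing_mandatory_fields` and the lowercase column names `street`, `zip` and `city` are assumptions, and the location is treated as complete if either the coordinates or the address are filled in.

```python
def missing_mandatory_fields(row):
    """Return the names of mandatory fields that are empty in one entry."""
    def filled(name):
        value = row.get(name)
        return value is not None and str(value).strip() != ""

    required = ("title", "description", "categories", "tags")
    missing = [name for name in required if not filled(name)]

    # The location is mandatory as coordinates and/or as an address.
    has_coordinates = filled("lat") and filled("lng")
    has_address = filled("street") and filled("zip") and filled("city")
    if not (has_coordinates or has_address):
        missing.append("location (lat/lng or street, zip, city)")
    return missing

# Hypothetical entry with everything filled in except the description.
entry = {
    "title": "Repair Cafe",
    "description": "",
    "lat": "48.137",
    "lng": "11.575",
    "categories": "#non-profit",
    "tags": "repair",
}
print(missing_mandatory_fields(entry))  # ['description']
```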

Avoiding duplicates

We have developed a duplicate checker (issue) that can be used during import via the REST API to find existing, similar entries. These usually then have to be checked manually.

The openapi.yaml shows how it works.

Easiest way: https://app.swaggerhub.com/apis/Kartevonmorgen/open-fair_db_api

Another way, which always shows the most up-to-date version:

- go to editor.swagger.io

- go to File -> import URL

- enter https://raw.githubusercontent.com/kartevonmorgen/openfairdb/master/openapi.yaml

Or you can use our command-line editor.
